Training deep learning models is known to be a time-consuming and technically involved task. But if you want to create deep learning models for Apple devices, it is now super easy with the new CreateML framework that Apple introduced at WWDC 2018.

You do not have to be a machine learning expert to train your own deep-learning-based image classifier or object detector. In this tutorial, we will see how to make a custom multi-class image classifier using CreateML in Xcode, in minutes, on macOS.

We need macOS Mojave or later (10.14+) and Xcode 10.0+. If you would like to deploy the model to an iOS app, you will need iOS 12.0+.

Benefits of using CreateML

There are several benefits of using CreateML for image classification and object detection tasks.

- Ease of use: Apple has made it very easy for developers without machine learning experience to create models.

- Speed: CreateML uses hardware acceleration to significantly speed up training and inference. You can train on a dataset of a few hundred images in seconds and on a few thousand images in minutes, rather than multiple hours.

- Size: When you train a deep learning model on a GPU, you either use a small network like MobileNet, or you use a larger network and apply pruning and quantization to reduce its size and make it run fast on mobile devices. These models range from a few megabytes to sometimes a hundred megabytes. That’s not cool if you want to use one in your mobile application. CreateML takes care of all those details under the hood and produces a model that is just a few kilobytes in size!

- Train once, use on all Apple devices: The model trained using CreateML can be integrated into iOS, macOS, tvOS and watchOS using CoreML.

Transfer Learning

You may have heard that a Neural Network is data hungry — it takes thousands, if not millions of data points to train one. How come CreateML requires only a few hundred images? The short answer is Transfer Learning.

In Transfer Learning, we use the architecture and weights of a pre-trained model, which has usually been trained on a large dataset for a different task. With this pre-trained model as the base, we change only the final output layers to suit the task at hand. We do not have to retrain the entire network for our own classification problem. This vastly speeds up training and also requires a much smaller dataset. For example, we could use a ResNet model pre-trained on ImageNet, a dataset of more than a million images spanning 1000 categories.

Apple uses this concept of transfer learning to let its users make custom deep-learning-based image classifiers.
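To make the idea concrete, here is a minimal sketch of transfer learning in plain Swift. It is a toy illustration, not how CreateML is implemented: `frozenFeatures` is a hypothetical stand-in for a pre-trained network whose weights never change, and `OutputLayer` is the only part that gets trained. The data points and hyperparameters are made up.

```swift
import Foundation

/// Hypothetical stand-in for a frozen, pre-trained feature extractor.
/// A real one would be a deep network; nothing in here is ever updated.
func frozenFeatures(_ input: [Double]) -> [Double] {
    // Fixed transform: pass the inputs through and append a constant bias term.
    return input + [1.0]
}

/// Numerically stable softmax over class scores.
func softmax(_ scores: [Double]) -> [Double] {
    let maxScore = scores.max() ?? 0
    let exps = scores.map { exp($0 - maxScore) }
    let sum = exps.reduce(0.0, +)
    return exps.map { $0 / sum }
}

/// The only trainable part: one linear layer mapping features to class scores.
struct OutputLayer {
    var weights: [[Double]]   // indexed as [class][feature]

    init(classes: Int, features: Int) {
        weights = Array(repeating: Array(repeating: 0.0, count: features), count: classes)
    }

    func probabilities(_ features: [Double]) -> [Double] {
        let scores = weights.map { row in
            zip(row, features).reduce(0.0) { $0 + $1.0 * $1.1 }
        }
        return softmax(scores)
    }

    func predict(_ features: [Double]) -> Int {
        let p = probabilities(features)
        return p.indices.max(by: { p[$0] < p[$1] })!
    }

    /// One pass of stochastic gradient descent on the cross-entropy loss.
    mutating func trainEpoch(samples: [([Double], Int)], learningRate: Double) {
        for (features, label) in samples {
            let p = probabilities(features)
            for c in weights.indices {
                let error = p[c] - (c == label ? 1.0 : 0.0)
                for f in features.indices {
                    weights[c][f] -= learningRate * error * features[f]
                }
            }
        }
    }
}

// Made-up two-class data: class 0 sits near (0, 0), class 1 near (1, 1).
let raw: [([Double], Int)] = [([0.0, 0.1], 0), ([0.2, 0.0], 0),
                              ([1.0, 0.9], 1), ([0.8, 1.0], 1)]
// Features are extracted once, up front, and then reused in every epoch.
let samples = raw.map { (frozenFeatures($0.0), $0.1) }

var layer = OutputLayer(classes: 2, features: 3)
for _ in 0..<200 {
    layer.trainEpoch(samples: samples, learningRate: 0.5)
}
print(samples.map { layer.predict($0.0) })
```

Because the feature extractor is fixed, the expensive step runs only once per image, and the part that is actually trained is small enough to fit with very little data.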

Scene Print: Apple’s tiny pre-trained model

A pre-trained ResNet model, a popular architecture, is about 90 MB when exported as a CoreML model file. Another popular model for mobile devices, SqueezeNet, is around 5 MB.

In comparison, Apple’s own pre-trained model, called scenePrint, is just around 40 KB!

How is that possible?

Psst… Apple is cheating! Well, sorta. Remember, it owns the operating systems (macOS, iOS, tvOS and watchOS), and the pre-trained model is bundled with the OS. Only the weights of the last layer need to be bundled with your app, and that is why the size is tiny.

What about the accuracy?

The performance is slightly inferior to ResNet and slightly better than SqueezeNet. More importantly, it is better than human-level accuracy! Apple provides a comparison of the same here.

Does it use the GPU?

This Vision model is already optimized for the hardware in Apple’s devices and uses GPU acceleration. It is available in macOS 10.14+ and Xcode 10.0+ SDKs.

Training a Custom Image Classifier using CreateML

Let’s see how to use the new scenePrint feature extractor model to train our own classifier on a MacBook Pro.

The experiments below were run on my mid-2015 MacBook Pro, which has:

- Processor: 2.5 GHz Intel Core i7.

- GPU: AMD Radeon R9 M370X with 2048 MB VRAM.

You can watch the GPU being used in Activity Monitor during training and inference.

That feels so good! Usually, because newer Macs do not have an NVIDIA GPU, we never train deep learning models on a Mac. But with CreateML, there is no need for a remote machine or cloud computing to do the heavy GPU lifting for basic deep learning experiments!

The network in the model takes in images of size 299×299. So it’s advisable to use images at least that large; otherwise the image will be upscaled before being fed to the network, which might lead to lower accuracy.

Dataset Preparation

In this post, we will show you how to build a multi-class classifier that can classify 10 different kinds of animals.

We will use the Caltech256 dataset. The dataset has 30,607 images categorized into 256 different labeled classes along with another ‘clutter’ class.

Training on the whole dataset would take around 3 hours, so we will work on a subset of the dataset containing 10 animals – bear, chimp, giraffe, gorilla, llama, ostrich, porcupine, skunk, triceratops and zebra.

The number of images in these folders varies from 81 (for skunk) to 212 (for gorilla). We use the first 60 images in each of these categories for training and the rest for testing in our experiments below.

If you want to replicate the experiments, please follow the steps below:

- Download the Caltech256 dataset.

- Create two directories with names train and test.

- Create 10 sub-directories each inside the train and the test directories. The sub-directories should be named bear, chimp, giraffe, gorilla, llama, ostrich, porcupine, skunk, triceratops and zebra.

- Move the first 60 images for bear in the Caltech256 dataset to the directory train/bear, and repeat this for every animal.

- Copy the remaining images for bear (i.e. the ones not included in the training set) to the directory test/bear. Repeat this for every animal.
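The steps above can also be scripted. The following is a minimal sketch, not part of the original experiments: `splitCategory` is a hypothetical helper, it assumes the images are `.jpg` files, and it takes "the first 60" to mean the first 60 when sorted by file name. Unlike the manual steps, it copies files into both splits, so the downloaded dataset stays untouched.

```swift
import Foundation

/// Copies the first `trainCount` images (sorted by file name) of one category
/// into `trainRoot/label` and the remaining images into `testRoot/label`.
/// Returns how many files went into each split.
@discardableResult
func splitCategory(source: URL, label: String,
                   trainRoot: URL, testRoot: URL,
                   trainCount: Int = 60) throws -> (train: Int, test: Int) {
    let fm = FileManager.default
    let trainDir = trainRoot.appendingPathComponent(label)
    let testDir = testRoot.appendingPathComponent(label)
    try fm.createDirectory(at: trainDir, withIntermediateDirectories: true)
    try fm.createDirectory(at: testDir, withIntermediateDirectories: true)

    // Keep only JPEG files (an assumption) and sort them so the split is deterministic.
    let images = try fm.contentsOfDirectory(atPath: source.path)
        .filter { $0.lowercased().hasSuffix(".jpg") }
        .sorted()

    for (index, name) in images.enumerated() {
        let targetDir = index < trainCount ? trainDir : testDir
        // copyItem throws if the destination file already exists.
        try fm.copyItem(at: source.appendingPathComponent(name),
                        to: targetDir.appendingPathComponent(name))
    }
    return (min(trainCount, images.count), max(0, images.count - trainCount))
}
```

You would call it once per animal, for example `try splitCategory(source: bearFolder, label: "bear", trainRoot: trainURL, testRoot: testURL)`, where `bearFolder`, `trainURL` and `testURL` are placeholders for wherever you unpacked the dataset and created the train and test directories.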

Build and Analyze an Image Classifier in Xcode

Now that our dataset is ready, we can follow the steps below to build an image classifier.

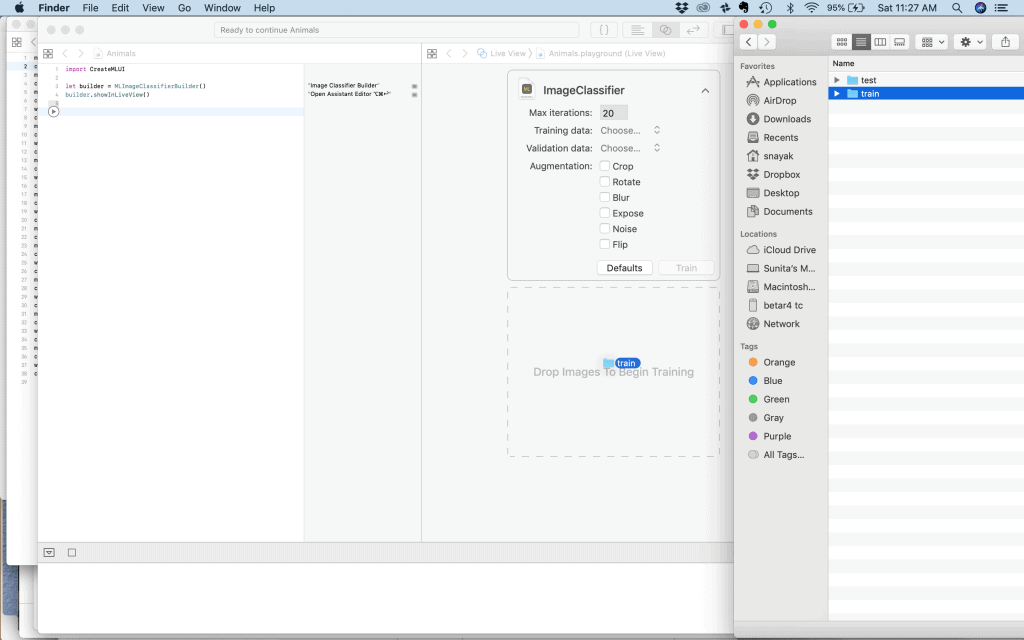

Step 1 : Open the Classifier Builder

Open Xcode (10.0+) and open a new playground using File->New->Playground. While doing so, choose a macOS Blank template.

Make sure the Assistant Editor is open on the right. Type the following in the main playground editor and hit Shift+Enter to run it.

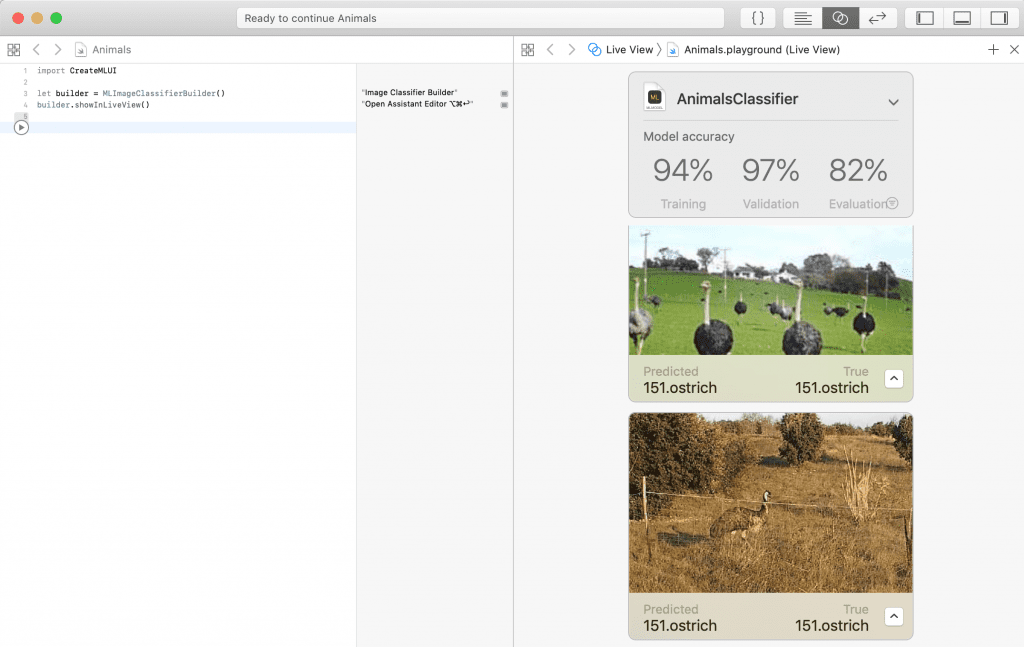

    import CreateMLUI

    let builder = MLImageClassifierBuilder()
    builder.showInLiveView()

When this executes, the image classifier builder shows up on the right in the Assistant Editor.

Step 2 : Training

Click on the drop-down next to the ImageClassifier, and set the Max Iterations to 20. For smaller datasets, this might lead to overfitting, but for the size we are working on now, it should be fine. Then drag and drop the training folder train to the area labeled as ‘Drop Images to Begin Training’ under the ImageClassifier.

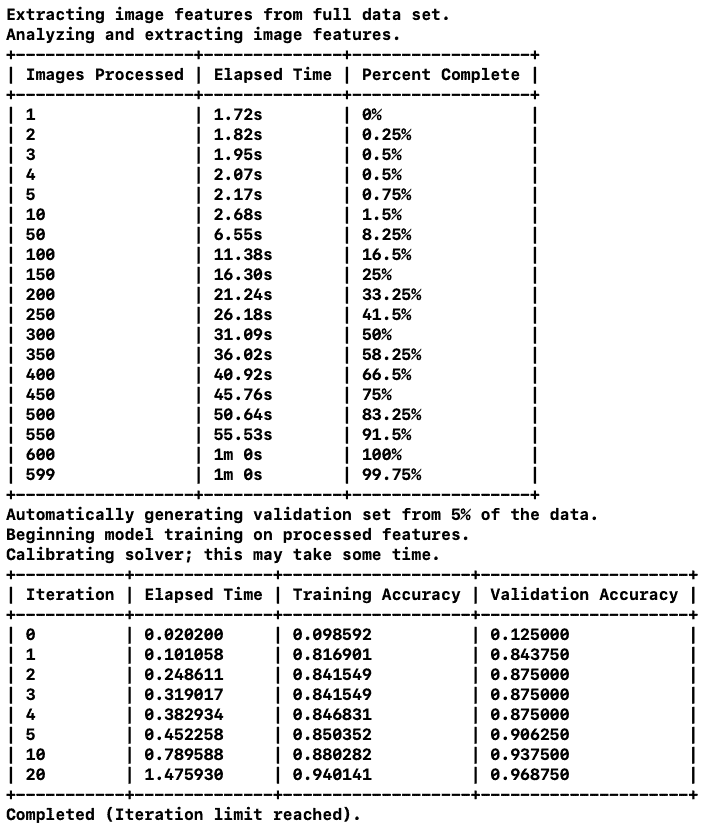

Training then starts. CreateML first extracts the feature vectors for all the images. This is the most time-consuming part of the process, and it does use the GPU. The extracted vectors are then used in the training iterations to predict the class probabilities for each image. As training proceeds, it prints the time spent on training, the training accuracy and the validation accuracy after each iteration.

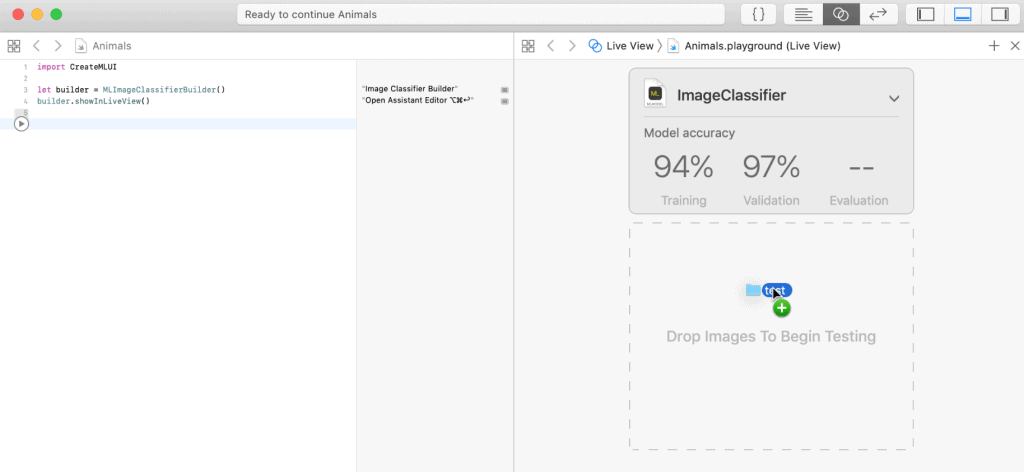

Step 3 : Testing

Next, we need to check the accuracy on our test images. Drag and drop the test image folder into the area labeled ‘Drop Images to Begin Testing’ in the Assistant Editor.

CreateML then extracts the feature vector for each of the test images and classifies them.

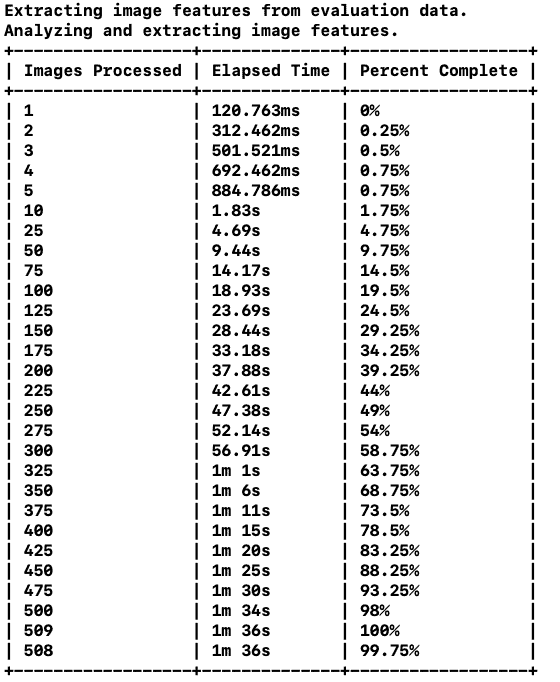

As we can see above, it processed 509 images in 1m 36s!

The final evaluation accuracy across all the test instances of all the 10 classes is 82% as shown in the Live view in the Assistant editor. It also shows the predicted and true class belonging to each test instance. You can scroll down the test instances to see the output for each of them.
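The same train-and-test workflow can also be driven from code instead of the drag-and-drop builder. The sketch below uses the `MLImageClassifier` type from the CreateML framework and needs to run on macOS 10.14+ (for example in a macOS playground). The three paths are placeholders you would replace with your own, and the iteration count is left at the framework default rather than the 20 set in the UI above; it can be adjusted through `MLImageClassifier.ModelParameters`.

```swift
import CreateML
import Foundation

// Placeholder locations of the folders prepared earlier.
let trainURL = URL(fileURLWithPath: "/path/to/train")
let testURL = URL(fileURLWithPath: "/path/to/test")

// Each sub-directory name (bear, chimp, ...) is used as the class label.
let classifier = try MLImageClassifier(trainingData: .labeledDirectories(at: trainURL))
print("Training accuracy: \((1 - classifier.trainingMetrics.classificationError) * 100)%")
print("Validation accuracy: \((1 - classifier.validationMetrics.classificationError) * 100)%")

// Evaluate on the held-out test folders.
let metrics = classifier.evaluation(on: .labeledDirectories(at: testURL))
print("Test accuracy: \((1 - metrics.classificationError) * 100)%")
print(metrics.confusion)        // confusion matrix as a data table
print(metrics.precisionRecall)  // per-class precision and recall

// Save the trained model so it can be added to an app and used through CoreML.
try classifier.write(to: URL(fileURLWithPath: "/path/to/AnimalClassifier.mlmodel"))
```

Exact numbers will differ from the ones reported in this post, since the validation split is chosen by the framework.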

Confusion Matrix

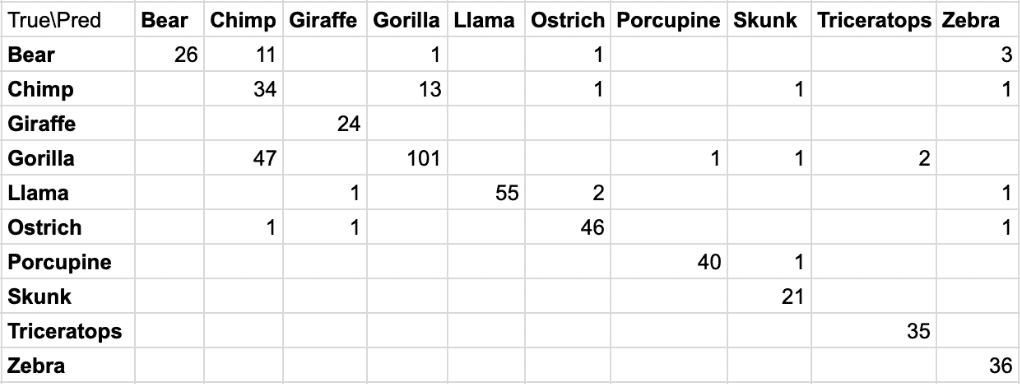

A confusion matrix gives an idea of how the classification model performs across the various classes. An entry (i,j) in the confusion matrix shows how many instances of class i are predicted as belonging to class j. Ideally, with zero error, all the non-zero numbers would lie along the diagonal of the matrix. The figure shows the confusion matrix from our evaluation.

As we can see, the biggest confusion is classifying gorillas as chimps, which is reasonable since they are the closest animals in terms of appearance. Some chimps are also classified as gorillas, but there are more gorillas in the test set than chimps.

The next big one in the confusion matrix is that some of the bears are classified as chimps. This could be an indication that the classifier needs more images of bears.
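If you have the true and predicted labels as plain lists, a confusion matrix like this is easy to compute yourself. The following sketch uses a hypothetical `confusionMatrix` helper and a handful of made-up labels, not the actual results from our evaluation.

```swift
/// Builds a confusion matrix: entry [i][j] counts the instances whose true class
/// is labels[i] and whose predicted class is labels[j].
func confusionMatrix(labels: [String], truths: [String], predictions: [String]) -> [[Int]] {
    // Map each label to its row/column index.
    let index = Dictionary(uniqueKeysWithValues: labels.enumerated().map { ($0.element, $0.offset) })
    var matrix = Array(repeating: Array(repeating: 0, count: labels.count), count: labels.count)
    for (truth, predicted) in zip(truths, predictions) {
        // Skip labels that are not in the list instead of crashing.
        guard let i = index[truth], let j = index[predicted] else { continue }
        matrix[i][j] += 1
    }
    return matrix
}

// Made-up example with three classes and six test instances.
let classLabels = ["bear", "chimp", "gorilla"]
let trueLabels      = ["gorilla", "gorilla", "gorilla", "chimp", "chimp",   "bear"]
let predictedLabels = ["gorilla", "chimp",   "gorilla", "chimp", "gorilla", "bear"]

let confusion = confusionMatrix(labels: classLabels, truths: trueLabels, predictions: predictedLabels)
for (label, row) in zip(classLabels, confusion) {
    print(label, row)
}
```

Each printed row is a true class and each column a predicted class, so any count off the diagonal is a misclassification.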

Precision and Recall

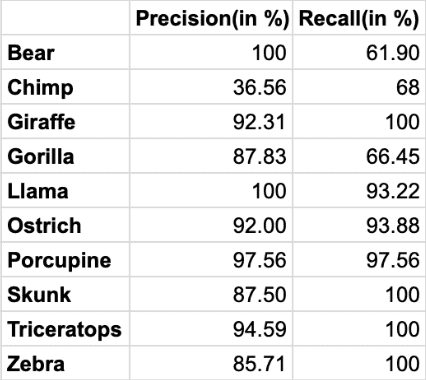

Precision for a class is the fraction of instances correctly predicted as belonging to the class over all the instances predicted as belonging to the class. In our results, it is 100% for the llama and bear categories. So each test instance predicted to be a llama is actually a llama, and each instance predicted to be a bear is a bear too.

Recall is the fraction of instances correctly predicted as belonging to the class over all the instances actually belonging to the class. In our example, we got 100% recall for multiple categories – giraffe, skunk, triceratops and zebra. So all the test instances belonging to these categories were classified correctly.
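Both quantities can be read directly off a confusion matrix: precision divides the diagonal entry by its column sum, and recall divides it by its row sum. Here is a small sketch with a hypothetical `precisionAndRecall` helper and a made-up 3-class matrix (not our actual results).

```swift
/// Precision and recall for class `k`, given a confusion matrix where
/// matrix[i][j] counts instances of true class i predicted as class j.
func precisionAndRecall(matrix: [[Int]], classIndex k: Int) -> (precision: Double, recall: Double) {
    let truePositives = Double(matrix[k][k])
    let predictedAsK = Double(matrix.reduce(0) { $0 + $1[k] })  // column sum
    let actuallyK = Double(matrix[k].reduce(0, +))              // row sum
    // Report 0 when a class was never predicted or never present, to avoid dividing by zero.
    let precision = predictedAsK > 0 ? truePositives / predictedAsK : 0
    let recall = actuallyK > 0 ? truePositives / actuallyK : 0
    return (precision, recall)
}

// Made-up matrix (rows: true class, columns: predicted class) for bear, chimp, gorilla.
let toyMatrix = [[1, 0, 0],
                 [0, 1, 1],
                 [0, 1, 2]]

for (k, name) in ["bear", "chimp", "gorilla"].enumerated() {
    let result = precisionAndRecall(matrix: toyMatrix, classIndex: k)
    print(name, "precision:", result.precision, "recall:", result.recall)
}
```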

Inference by Users

Apple’s CoreML framework lets users run on-device inference with models created using CreateML. This helps preserve the user’s privacy and needs no internet connection, unlike apps that rely on web-based inference.
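As a rough idea of what on-device inference looks like, the sketch below classifies a single image file with the Vision and CoreML frameworks. The `classify` function and its parameters are hypothetical names chosen for illustration. It compiles the `.mlmodel` file at run time, which suits a quick macOS experiment; inside an app, Xcode normally compiles the model for you when you add it to the project.

```swift
import CoreML
import Vision

/// Classifies one image with a model exported from CreateML and returns the top label.
func classify(imageAt imageURL: URL, modelAt modelURL: URL) throws -> (label: String, confidence: Float)? {
    // A .mlmodel file has to be compiled before it can be loaded at run time.
    let compiledURL = try MLModel.compileModel(at: modelURL)
    let visionModel = try VNCoreMLModel(for: MLModel(contentsOf: compiledURL))

    // Vision scales and crops the image to the input size the model expects.
    let request = VNCoreMLRequest(model: visionModel)
    let handler = VNImageRequestHandler(url: imageURL, options: [:])
    try handler.perform([request])  // runs synchronously, entirely on the device

    // Classification results arrive as observations; take the first one as the top prediction.
    guard let best = (request.results as? [VNClassificationObservation])?.first else {
        return nil
    }
    return (best.identifier, best.confidence)
}
```

No image data leaves the machine at any point, which is the privacy benefit described above.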

We will not go into the details of creating an inference app in this post, but Apple provides very good documentation, with sample code, for building an iOS app using Vision and CoreML models here.

You could also build an iOS app that does real-time image classification with ARKit. Its sample code is here.

One thing to keep in mind while building your own app with a custom model is that the scenePrint model works best if the source of your training data matches the source of the images you want to classify. For example, if your app classifies images captured with an iPhone camera, train your model using images captured the same way, whenever possible.

References

Griffin, G., Holub, A. D., Perona, P. The Caltech 256. Caltech Technical Report.

Introducing Create ML by Apple at WWDC 2018

Image Classifier User Guide
